I’m writing this on the train to Birmingham* as I travel back to Cambridge with some of the AI Narratives team (Dr Stephen Cave, Dr Kanta Dihal, and Professor Toshie Takahashi) after our stellar performance at the Science in Public Conference in Cardiff. We presented on our work on the perceptions and portrayals of AI and why they matter (our report on this with the Royal Society is here), highlighting the tensions in those narratives and how they differ in different regions, such as Japan.

While waiting for the SiP conference dinner to start last night I spent a little time on Twitter observing conversations around AI, and I came across an interesting example of an AI conspiracy theory that really highlighted my thinking about our panel and about some of the other panels on AI at SiP – this is the story of the death of 29 Japanese Scientists at the hands of the very robots that they were building, an event that has allegedly been hushed up by the ‘authorities’.

My paper for our AI Narratives panel was on “Elon and Victor: Narratives of the Mad Scientist as applied to AI Research”, and I explored the presumed synergy between Mary Shelley’s story and its two main characters and current aims and aspirations in AI. In the past year, the 200th anniversary of Frankenstein’s publication, I have been asked to give four talks on AI and Frankenstein (including one for the 250th anniversary of my new college Homerton, which has a link with Shelley through her father, William Godwin, who was refused admission to the original Homerton Academy on suspicion of having ‘Sandemanian tendencies’, ie being a part of a Christian sect with particular non-conformist views of the importance of faith). I use examples from the media and popular culture where AI is presented as Frankenstein’s creature, and for this paper, cases in which the AI influencer (if not exactly AI scientist) Elon Musk is portrayed as Victor Frankenstein – either positively or negatively – and how that depiction recursively interacts with the public perception of AI research, as shown in tweets about him, but also in concerns about what these ‘AI experts’ are up to.

Another paper at the conference also tackled the ‘AI expert’, with a rather negative account of who can even be an expert, as well as a dismissive attitude to anyone speaking about ‘AI’ as it ‘does not exist yet’. To my mind this was a No True Scotsman argument (or perhaps, since we were in Cardiff, a No True Welshman argument) as the speaker accepted neither aspects of AI research such as machine vision as real ‘AI’, nor those working on this technology as credible AI experts. AI in this conception was, I think, much closer to AGI, and thus everything before that point was not real AI and therefore not worth worrying about in apocalyptic terms. Their concluding statement was that we should chill and stop spreading hyperbolic concerns about AI as it’s just not here yet. Particular criticism was aimed at ‘AI Experts’ working outside of their usual expertise – so Hawking, Kurzweil, Musk et al – and giving us apocalyptic narratives about the future of AI. As AI, in this definition, doesn’t exist yet these experts were dismissed on two counts – as speaking outside of their wheelhouse and about something that wasn’t even real.

Returning to the ’29 Scientists’ conspiracy theory. I spotted this and was fascinated by it as an example to link together some of my thoughts around my paper, our AI Narratives panel, and this other paper. First, the story. This is a link to the original speech given by Linda Moulton Howe. She is a ufologist and investigative reporter who began her career writing about environmental issues before focusing on cattle mutilations with the 1980 documentary, Strange Harvest, which received a regional Emmy award in 1981. In the field of UFO thinking, she is an expert and her journalist past and award give her authority.

This talk was given at the Conscious Life Expo in February 2018, and in it she refers to the death of 29 Japanese scientists at the hands of their own creations in the August of the previous year. She claims that this disaster was hushed up and that a whistleblower had come to her to share the truth. This specific section from her overall talk on the dangers of AI was posted on YouTube on 14th December with an added frame that said the event had happened in South Korea, even though she clearly says Japan. In tweets since 14th December about the ’29 Scientists’ the details do not vary very much, apart from that mis-location: 29 Japanese scientists were working on AI in robotic forms and were shot by ‘metal’ bullets from them; the robots were destroyed, but one of them uploaded itself into a satellite and worked out how to rebuild itself ‘even more strongly than before’. In the talk she highlights her fears about AI not only with this whistleblower’s story, but also with clips and quotes from Musk and Hawking. Her position is obviously that they are very much the voices we should be listening to.

Who is allowed to be an ‘AI expert’? This was the question that the other paper at SiP got me asking. I recognized in my own paper that Musk is not directly working on building AI (and Hawking certainly wasn’t), but that he funds research into avoiding the risks of badly value-aligned AI (as described in the work of Nick Bostrom). He has links with CSER and through them to the CFI, where we are considering AI Narratives. Hawking spoke at the launch of the CFI itself, expressing his concern that AI could be either the best or the worst thing to happen to humanity. Are these voices and others experts? Is anyone an expert if they come from other fields? As a research fellow and anthropologist I consider myself an expert on thinking about what people think about machines that might think, but could I be summarised as an ‘AI expert’? I certainly have been, but I make no claims to be building AI! While not on the scale of a Musk or a Hawking, I still think my perspective has value and even impact (I do public talks, make films, offer opinions). Perhaps it shouldn’t? But then valuable thinking from anthropology, philosophy, sociology, etc would be lost.

With Musk et al, the much better known ‘AI experts’, I argue that there is a Weberian form of charisma being exhibited. It might not entirely rest within them – although Musk certainly has a large following on social media who react to him and his work on a personal level – it might also be thought to lie within the topics that they discuss. The authority they have in other science and technology fields also lends legitimacy. Weber’s schema for legitimate authority was rationality-tradition-charisma. While work in AI safety has internal legitimation through claims of rationality (Bostrom as a philosopher is a prime example of this, as well as the wider rationalist eco-system including sites such as LessWrong which I have discussed elsewhere), I would argue that the public statements of Musk and others also rely on charismatic authority – the ideas and people speaking are affectively compelling.

Further, I would suggest that a much longer history of apocalyptic thinking, a ‘tradition’, underlies this discourse, and certainly when such stories as ’29 scientists’ are discussed in conspiracist circles their reception is based upon a long (and linking) chain of such claims that map onto earlier models of apocalypticism (see David Robertson’s book on conspiracism for his discussion of ‘epistemic capital’, authority, and the nature of these kinds of ‘rolling prophecies’).

Prophecy also provides moral commentary: through claims about the future we can understand critique of the present. A lot of the negative comments about AI experts at the SiP conference were focused on prediction, and the idea that they were ‘wagering’ on certain futures and were likely to be proven wrong on specific dates for AGI (the example used was Kurzweil’s claims about the Turing Test being beaten by 2029). But prophetic statements made by Kurzweil (called a ‘prophet’ in the media on occasion) also express his critique of current society: if we are going to be smarter/more rational/sexier (!) post-Singularity, what are we now? While not a forward-looking prophecy, as the event was said to have happened in August 2017, the ‘29 Scientists’ story also contains moral commentary – the scientists are killed by their own creations, and their hubris (like Victor Frankenstein’s) is something we need to learn from and avoid. The secrecy around the deaths and the role of state authorities in hushing up the truth is obviously a common trope in conspiracy theories, but we could also note a techno-orientalism as well (a narrative that Toshie discussed in her paper at SiP). That the scientists are specifically Japanese plays into some negative tropes about Japanese culture and its ‘too strong’ interest in robots. In Moulton Howe’s talk it is clear that she wants everyone to pay attention to the moral of this story of the death of the 29 Scientists – ‘be careful what you wish for’ – but it is the Japanese scientists who pay the fatal price for their hubris, while the ‘rational’ (and charismatic) authorities, the Western scientists represented by Musk, Hawking etc, have been warning us about the dangers of AI and we just haven’t been listening.

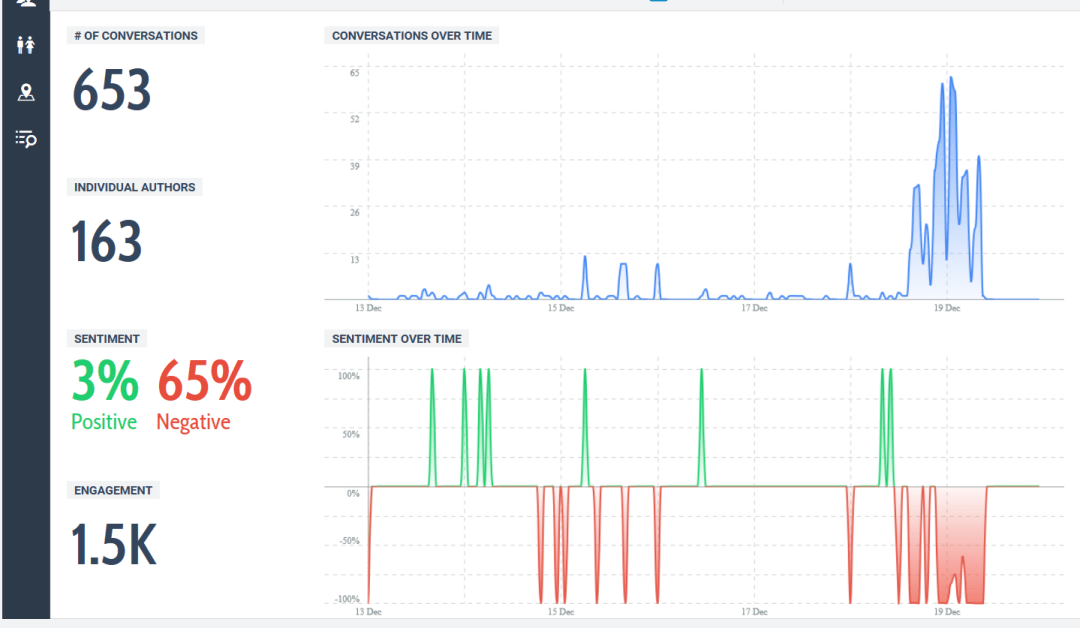

I’m tracking the spread of this story and seeing growing interest (both those who believe the story and those who are dismissive). I myself tweeted that I was doing this research, leading one person to ask if I was placing bets on its spread and then rigging the game by sharing the story! This is a perennial problem for the ethnographer – highlighting a culture or narrative can change it – making it more popular or even putting it under new pressures that lead to its demise (I’m looking at you Leon Festinger!). But I’ve taken a snapshot of the story and the conversations around it using a couple of different digital tools, so I can also note when/if my influence occurs:

This is not a huge number of interactions compared to many other viral stories. But I think it’s an interesting case study: it highlights the nature of the AI expert, who is believed and trusted, charismatic authority, conspiracy culture, AI apocalypticism, and techno-orientalism.

*I lied, my train is actually heading to London Paddington. See, you can’t believe everyone online 🙂
