The past weekend saw the Faraday Short course take place in conjunction with our subproject on the implications of developments in AI and Robotics. From Friday to Sunday, members of the public could listen to invited speakers, engage in breakout discussion groups, and even grill the speakers mercilessly during an evening panel!

Photo by Gavin M.

The short course went extremely well in terms of organisation and in the generation of conversations. Anyone seeking definitive answers might have been disappointed, but in part the course was addressing what a religious response to the questions raised about AI and robotics might look like – with, at this point, that response limited to Christian interpretations.

The Faraday Institute is very successful in bringing together voices that we might initially think to be in opposition. Thus it was fruitful to note where those who were overtly secular in fact agreed with those who were religious. That was one of the main arguments of my own talk for the course: that future-tech-focussed groups in fact draw on their home context of religious eschatology in forming their science ‘fact’ speculations about the future of AI and robotics. My two particular examples, drawn from conference papers I have given this summer, were the pragmatic attempts at the development of a “Theism from Deism” and a Transhumanist Church, and the potentially ‘tremendum et fascinans’ implications of certain Singularity thought experiments such as Roko’s Basilisk.

A slide from a presentation by the Transhumanist, Giulio Prisco, from the 2014 Mormon Transhumanist Conference – presented during my paper at BASR 2016 earlier this month.

However, during the course it was interesting to see both Computer Scientists and Christian Theologians refer to belief in the personhood and/or intelligence of potential AIs as a “category mistake”, drawing in part on Ryle (1949), Searle (1980) and Kelly (1994). This dismissal came at the concept from the two apparently distinct directions of the ‘secular’ and the ‘religious’, but they found agreement in their views during the course – or at least those who discussed this issue with me did.

I am convinced, though, that two further issues complicate this apparent certainty and agreement. First, and perhaps quite obviously, anthropomorphism makes this “category mistake” somewhat irrelevant. As another speaker noted, all we ever have from other humans is the appearance of genuine intelligence – even emotions – to the extent that the speaker raised the possibility that their long-time friends might in fact have been “acting out” the friendship. But should the speaker die without knowing this, then the effect would be no different from their having been ‘genuine’ friends.

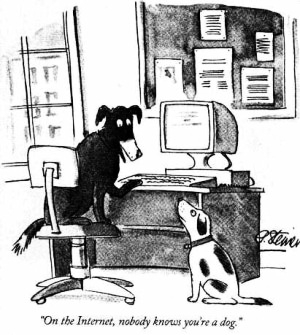

In some ways, as the lone social anthropologist – as far as I am aware – I felt like this was a conversation catching up with something participant observers have known for a while. I have perhaps been more aware of this issue, being a researcher in digital communities, and being regularly asked if I can believe anything that I am told by someone online – in particular about their religious belief.

My answer, and my answer if I were asked about the ‘genuineness’ of a robot’s response, has been to point out that we take things for granted in face-to-face conversations with humans as well. You tell me your name, how you are feeling… even what you believe. How do I know that you are telling the truth, or even further, that there is an intelligence separate from me actually having these genuine experiences? We give the benefit of the doubt to every human we ‘meet’ – even, or especially, online.

Second, as J. M. Bishop states in his 1995 review of Kelly’s 1994 book:

> Kelly meticulously examines the claims, achievements and underlying philosophy of proponents of AI, highlighting the limitations of each theory, with the effect that reading the book is rather like being confronted by a slow moving traction engine. By the end only the most religious practitioners of AI will have moved to apostasy.

In this statement Bishop intends to highlight Kelly’s successful argument, but he accidentally draws attention to what I see as the second issue in relation to the ‘category mistake’ argument. There is a strong element of the religious in a continuing belief in AI as potentially becoming a genuine person. That religious, and particularly eschatological, influence was what I wanted to bring attention to in my paper. And Bishop himself is obviously not immune to that influence: he refers to the “most religious” and those who have (rightly in his mind) moved to “apostasy”.

Even overtly secular responses to AI and robots draw on the religious context – primarily the Western Christian understanding of the shape of the future and of what a supreme intelligence or being might look like – in their discourse. Paying attention to this influence is key, as I said in my paper, because this shaping by religious eschatology can underlie claims about the future of AI, make certain individuals influential (or even charismatic), and be used to generate interest in funding applications for particular technological developments (see Geraci, 2010). As a New Religious Movements scholar, I am particularly interested in this aspect of developments in AI and robotics, and it will form the basis of my continuing research in this area.