I hope that you’ve never experienced the Spinning Wheel of Death. But if you are a Mac user you may have had that gut-wrenching moment of despair when everything freezes, the app you are using judders to a halt, and all you can do is watch, hypnotised by the rainbow wheel that spins, and spins, and spins…

What’s making me think about this little beach ball of evil this sunny morning? I saw on my Twitter feed that in March TechEmergence published the results of interviews with 40 AI experts on what they think is the most likely AI risk in the next 20 years.

TechEmergence have displayed this data in a rather neat spinning wheel infographic made up of 33 of the researchers and their bio pics, grouping them and some of their one-liners into seven categories of risk (you can subscribe to be sent the complete data set as a spreadsheet, which also includes longer-term predictions). These categories are:

- None Given (6 researchers)

- Malicious Intent of AI (1)

- General Mismanagement of AI Technology (5)

- Superintelligence (2)

- Surveillance/Security (3)

- Automation/Economy (12)

- Killer Robots (4)

The respondents are a mix of technological experts and more philosophically inclined researchers like Dr Peter Boltuc.

Ignoring the fact that this wheel of predictions could easily be submitted to the Congrats, You Have an All Male Panel! Tumblr (I found Joanne Pransky hidden away in the data set but not on the infographic), I have a few issues with this research and its presentation.

First, the coding. In particular the category of ‘None given’ for answers on risk like:

“The revelation that human minds may not be as wonderful as we all thought, leading to the inevitable humiliation and denial that accompanies significant technological breakthroughs.” (Dr. Stephen Thaler)

This kind of existential risk might be more worrisome than just assuming Humanity will go through some sort of ‘emo’ phase as its place in the ‘centre’ of the universe is displaced. Responses could be far more significant, including but not limited to: putting new technology to use in new religious narratives as this once stable category (our singular superiority) is displaced. If you don’t think the emergence of these narratives can be significant, consider the impact of long distance communications (i.e. the telegram and Morse Code) on the emergence of spiritualist ideas about our ability to communicate with an ‘afterlife’. This communication might be an idea that you do not subscribe to, but it has had an impact on society, around the world, since the 1900s. Not least in the development of the New Age movement and in the emergence of specific New Religious Movements that aim to connect their believers with an afterlife.

“The biggest risk is some sort of weaponization of advanced narrow AI or early-stage AGI. This wouldn’t have to be killer robots, it could e.g. be an artificial scientist narrowly engineered to create synthetic pathogens – or something else we’re not currently worrying about. Human narrow mindness and aggression, aided by AI tools that are more narrowly clever than generally intelligent or conscious. That’s what we should be worrying about, if anything. Fear of advanced AGI is mostly just generic “fear of the unknown”, and tends to be based on insufficiently deep thinking about the open-ended nature of intelligence.” (Dr Ben Goertzel)

Again, here’s a whole bunch of risks. The distinction between narrow AI and advanced AI is a key one to make, and not one expressed by TechEmergence in its introduction or framing of this work. Clarity on such terms matters: words shape the conceptions people have of the risk that TechEmergence is trying to give a picture of. Likewise, coding military applications of AI as ‘Killer Robots’ is leading, and also not a fair description of comments like:

“Intelligent drones bringing military competition to the next level.” (Dr. Peter Boltuc)

The ‘Killer Robots’ trope owes a fair deal to films like Terminator – images from which are regularly wheeled out in articles on Artificial Intelligence because, according to a journalist I spoke to recently about this, a “sense of narrative investment, if not baggage, is valuable, I think. Otherwise the robots can feel inert”. TechEmergence however makes claims to some neutrality: in the dataset is a page of disclaimers, including:

“It’s remarkably important for me to make it clear that I am neither a techno-pessimist or a techno-optimist. In general, we don’t pursue interviews or ask questions to garner “cool” or “futuristic” answers. Rather, our aim is to get a legitimate lay of the land from researchers and experts who know what they’re talking about. TechEmergence exists to proliferate the grander conversation rather than to push a particular set of beliefs or predictions.”

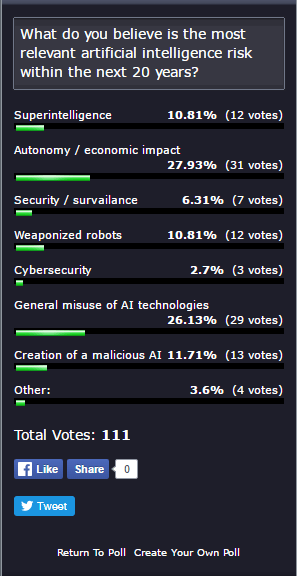

But words have impact. The Spinning Wheel of Death (see, this post has biased you into thinking these researchers are all doom-sayers…) rests above a survey for readers, dividing risk again into categories, with ‘None given’ replaced by ‘Other’ with a write-in box for responses. So far there have been 111 responses:

This survey does not directly match the categories applied to the AI researchers’ answers. We can note that ‘Killer Robots’ has been replaced with ‘weaponized robots’, and ‘Cybersecurity’ seems to be in the place of ‘Malicious Intent of AI’, although these are hardly equivalent. It would also be interesting to see what was written in for those ‘Other’ answers.

I think what most drew me to the Spinning Wheel of Death is that – in words that its creator uses when discussing the neutrality of TechEmergence on the subject – it’s about beliefs and predictions. The voices involved are key figures in the consideration and development of AI, so they speak from experience. But we don’t really know what risks lie ahead; this is crystal ball gazing.*

And I find that extremely fascinating. Not quite Spinning Wheel of Death hypnotising perhaps. But remember, that ball only appears when something has gone wrong with our advanced tech, something perhaps unpredictable. Just like AI.

*Another spiritual ‘technology’ influenced by real world developments in communications tech at the time

This comment hardly denies risk; it just assumes that risks can be combatted by other technologies. Technologies perhaps with their own inherent risks…