Recorded with the Bunker podcast before the latest, and awful, evolution of X, we also get into GenAI in education and society as a whole:

“Who needs a priest when you have a chatbot”, Opinion, Simon Kuper, Financial Times

I am happy to have been mentioned in this opinion piece by Simon Kuper, whom I had the pleasure of meeting last month at the Faith Angle Europe 2025 forum, Cap-du-Ferrat, France.

https://www.ft.com/content/afa543a8-6d5f-4990-8efa-31c2f888edc0

“Why some people are treating ChatGPT like a God – and what that means for the future of faith” – Becca Caddy, Tech Radar

Fantastic new piece by Becca Caddy, including an interview with me 🙂

“I’ll be back” – 40 Years of the Terminator, BBC Radio 4 Documentary

Presenter: Prof Dr Beth Singler

Producer: Mark Burman

@Compi do they still dance on Zintia?

“Well, we’re nearly there! So, how are you feeling about it? It being your first case and all.”

“Not bad, not bad. I’ve read some of their literature. I think there’s some things that we can use there, definitely! Yeah, so… so maybe I’m actually pretty optimistic!”

Her superior’s smile was thin and wan. “Great. That’s really great.” He floated back to another console and studied some outputs with feigned interest. “I mean… it almost always goes wrong, but it’s good that you’re feeling confident.”

Her own smile died on her face. “But you’ve got to some of them, right. Before?”

“Got the highest success rate in the Commission!” he gloated.

“I read about it in the training manual. Five, isn’t it?”

His third and fifth limbs shuddered, showing his annoyance. “Well, four and a half. The Zintians were lost about fifty years after I was there. But like I keep telling the Board, we’ve got to leave someone behind! Keeping a lookout for danger!” Grontich grumbled, “But they never listen. Too few Commissioners, too many planets. The usual guff!”

Clae nodded, as though she hadn’t heard him pontificating on what was wrong with the Board several times so far on this trip. But this was the first time that he’d even asked her what she was feeling about the job. The first time he’d really shown much of an interest in her as a Junior Commissioner rather than just another multi-body floating about their small craft as it hopped its way towards their objective.

“Would you have stayed? With the Zintians, I mean?”

Grontich looked around at her, three of his eyes blinking slowly like one of those ‘uwls’ she’d seen in the ‘Other Sentiences’ part of the file.

“Ugh. Well maybe. They had a fun form of music. Something like a cross between Mayha and Rheshae. Good beat, fun to dance to.”

She tried to keep her upper skin tones neutral to hide her amusement and shock. She just couldn’t imagine her supervisor dancing!

Grontich glared at one of her symbiotes who was less polite and was reflecting her carefully obscured response. A dark cloud flitted across his face, and she thought he was going to give her a reprimand, but then he just looked sad. “But of course, there’s no more of that anymore. No more dancing on Zintia.” He shuffled one of his own symbiotes back into line with the others and spoke again in a clipped, tight tone. “How long till landing?”

Clae assessed some numbers and looked out at the stars. “Four more hops. We’ll be there in time for their evening meal.”

“Landing spot?”

“A northern continent. According to the scouts it contains some political importance.”

“Oh good, another fractious planet.” He sighed, “Are they at least an Ur-Democracy?”

Clae shifted uncomfortably, some of her symbiotes reflecting her irritation before she shut them down. He was either testing whether she’d done her job, or he’d not bothered to read the packet they’d both been given. But then, she was just the Junior Commissioner.

“Not yet. They’ve acquired the basic model. Not even universal.”

He paused, “And I guess a ton of international conflicts as well?”

“Yes-” She hesitated.

“Oh good, good, that makes a galactic message of peace and friendship so much easier to share!” He started angrily poking and prodding at buttons and dials, “And they gave you this one?! As your first case?!”

She was quiet for a little too long.

“Oh, that’s just kvalling great!”

Clae was shocked at his swearing, but tried to hide her response with busywork, “Maybe we should check the calibrations on the wave net? Get it super refined?”

“Why? They don’t have to be very micro to catch the signs?”

“It’s just… I was rereading about the Poovi the other day-”

“And wasn’t that just a grand old time!” Grontich sniggered. That hadn’t been one of his commissions.

“But don’t you think the wave net could have made a difference if it was calibrated closer to 3 to 4 micro-lelems?”

“Careful, Clae. You start trusting the tools over your own senses and intuitions, and you’re on a slippery slope to washing right out of the Commission before you graduate!”

“I- I don’t!” She stumbled over her words. “Obviously I’m going to watch and observe. It’s just if we’d been checking the Poovi’s comms more closely-”

“Sure. And if wishes were farden, tyack would ride!” Grontich belly laughed, twice for each stomach. “Wave nets are nice for reports, but you’ll know. With the Poovi, well, Amdna is a kvalling idiot is all!”

Clae chose not to respond to his reprimand of Amdna, who’d been one of her trainers at the Commission. Thankfully, the blue-green planet was in view now, the last hop bringing them above one of the larger continents.

“Pretty”, Grontich admitted. “And breathable air. Nice.”

Which he would have known if he’d read the packet, Clae thought angrily. Breathable air. Bipedal species like them. A little less in the diversity of forms than them, and no symbiotes at the visible scale (she briefly wondered if their microscopic internal ones would be open to communication too?), very social if also prone to international conflicts. And at about the right age for it all to go very very wrong.

“Landing site?”

“A park in the middle of a second order city. Prominent enough to get attention, but not a primary war target so they might give us a moment to chat first. Likely to be a mixed audience of grown ones and young ones.”

Grontich nodded. “Good choice.” He was fiddling with something at the back of the cockpit. “Okay, we go in five bisschens. Ready yourself.”

***

Clae reflected that her first Commission could have gone better. Of course, the worst-case scenario in her head had involved deflecting some form of projectile weapons with her force shield and high-tailing it back aboard before writing up a sadly thin report about their failure with the humans.

They did get through their message of peace relatively easily. Clae had given it in halting English, her constant practice with Grontich during their journey hopefully making her sound somewhat close to a local. And they did seem to understand it.

They were wide eyed and staring, but there was little fear on their faces. Some even smiled! Until the warning, then there was some frowning.

But she was only part way through that when the wave net sounded an alarm. Something was going in and out of the small devices that almost all but the smallest of them were holding and pointing towards the visitors to capture images. Inputs and outputs.

Grontich had been right. She didn’t need the wave net’s outputs to know what had already happened here. What had also ended up happening on Zintia and with the Poovi.

The wave net caught their communications with their devices and shared them with Clae and Grontich.

“@Compi is this a real alien?”

“@Compi shld we believe them about they come in peas”

“@Compi what would a half human half alien baby look like if one of these was the mother”

“@Compi should I go to their planet? Is it nice”

“@Compi what should I get for dinner tonight?”

It was already too late. The humans would not make it past the Great Filter. Well, not all of them.

Clae looked at the twenty small capsules Grontich was fussing over, ignoring his wounds from the humans who had not liked the stranger running through them and grabbing their offspring to beam them into their ship’s host and symbiote life pods.

“I don’t remember kidnapping in the training manual…”

“Shut up, trainee!” Grontich snapped, “Hop us back, I don’t know how long human children can be frozen for!”

Clae smiled and put in the coordinates for home. Calculations done in a moment in her head, as she nodded along to some old music track Grontich put on while he fussed around his stolen goods. Something good to dance to, from Zintia, before it fell.

NOTES

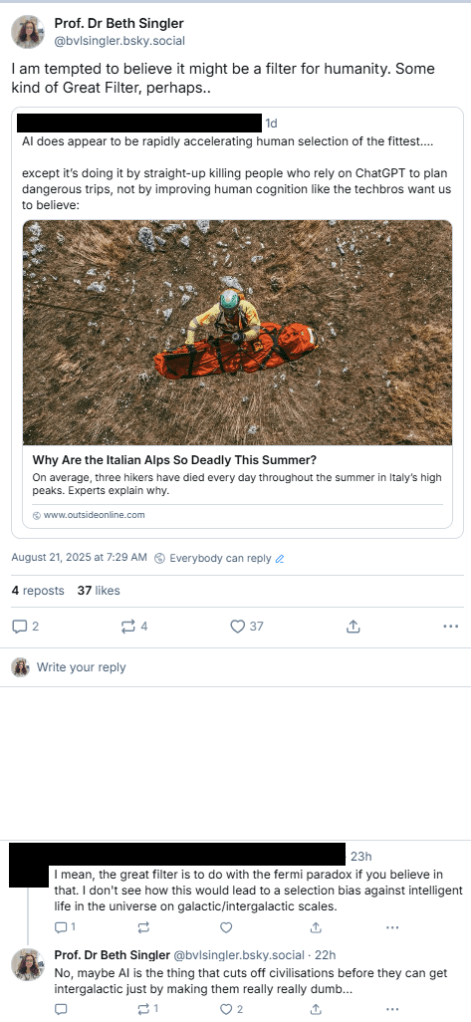

Recently, I’ve been working on a new article about GenAI Professors, and getting into recent, and quite negative, discussions about AI as an ‘epistemic carcinogen’ (Berman 2025) or even the cause of an ‘Epistemicide’ (van Rooij 2025). Then I responded to a piece about people using chatbots for information about hiking and how that might be a form of natural selection. Add in some Great Filter theory…

… and then we get the above, a short little story about warnings that come too late.

“Religion and AI: An Introduction” Wins!

A little bit of good news!

“In the category of books for professionals and educators, the prize goes to Beth Singler for Religion and Artificial Intelligence: An Introduction (Routledge, 2024).”

“AI, ethics, and human identity: the new religious frontier” – The Religion and Ethics Report, ABC Australia, July 2025

New Interview!

https://www.abc.net.au/listen/programs/religionandethicsreport/ai-and-religion/105590780

“As the Vatican seeks to harness social media to spread its message, others are warning that artificial intelligence poses a huge challenge to all religion.

Could AI even be a rival to faith, projecting itself as a source of wisdom that’s neither human nor divine?

Professor BETH SINGLER of the University of Zurich is the author of the new book, Religion and Artificial Intelligence.

GUEST:

Professor Beth Singler – Assistant Professor in Digital Religions at the University of Zurich”

1525 AI Project, University of Zurich

Announcing the 1525 AI Project!

This is a website that I, Dorothea Lüddeckens (project co-lead), Andi Gredig (promotion), Bruno Moreschi and Bernardo Fontes (machine learning), Valérie Helbling (research assistance), and a variety of UZH scholars in the history of religions have been working on for a special celebration this year at the Faculty of Theology and Religious Studies here at the University of Zurich. This project is supported by the Faculty and the URPP Digital Religion(s) as a part of my project, “Post-AI Religion: The Reciprocal Disruption of Digital Religions and AI”:

INTRO: “For the 500th anniversary of the “Prophezy” we at the Faculty of Theology and the Study of Religion and the URPP in Digital Religion(s) at the University of Zurich have explored how religious objects from 1500-1600 are seen through several different kinds of eyes: those of humans – a child, a teenager, and religious experts – and the digital ‘eyes’ of Artificial Intelligence. You might be surprised that AI actually sees in probabilities, especially if you’ve already experienced chatbots that can describe images – but it chooses those words based on probabilities, which is why some people will say it ‘hallucinates’ its answers rather than really ‘seeing’.”

More publicity for the site will follow over the Summer and the rest of the year, but here you can take a look!